The most successful AI work in a small business is the work nobody notices. No tell-tale em-dashes scattered like confetti, no opening paragraphs that “delve into the multifaceted landscape” of anything, no suspiciously symmetrical bullet lists with three items of identical length. The goal is not to broadcast that a machine helped — it is to produce a quote, a stock report, a customer reply or a product description that reads as if a thoughtful human did it on a quiet Tuesday afternoon. If your customers compliment the work without spotting the prompt, you have done it correctly. If they wince at the cadence and ask whether you have been “using that ChatGPT thing”, you have not.

This piece covers the foundations: the small set of ideas that, once understood, make every other AI decision easier. It is deliberately practical, written for the owner of a five-to-fifty-person business who would rather spend the afternoon doing the work than reading another think-piece about the future of work.

The Base Technology Stack

Almost every AI product on the market is built from the same handful of components. Knowing the parts — rather than the brand names — is the single biggest upgrade to your decision-making.

- Large language models (LLMs) — the text engines. Everything from short replies to whole reports.

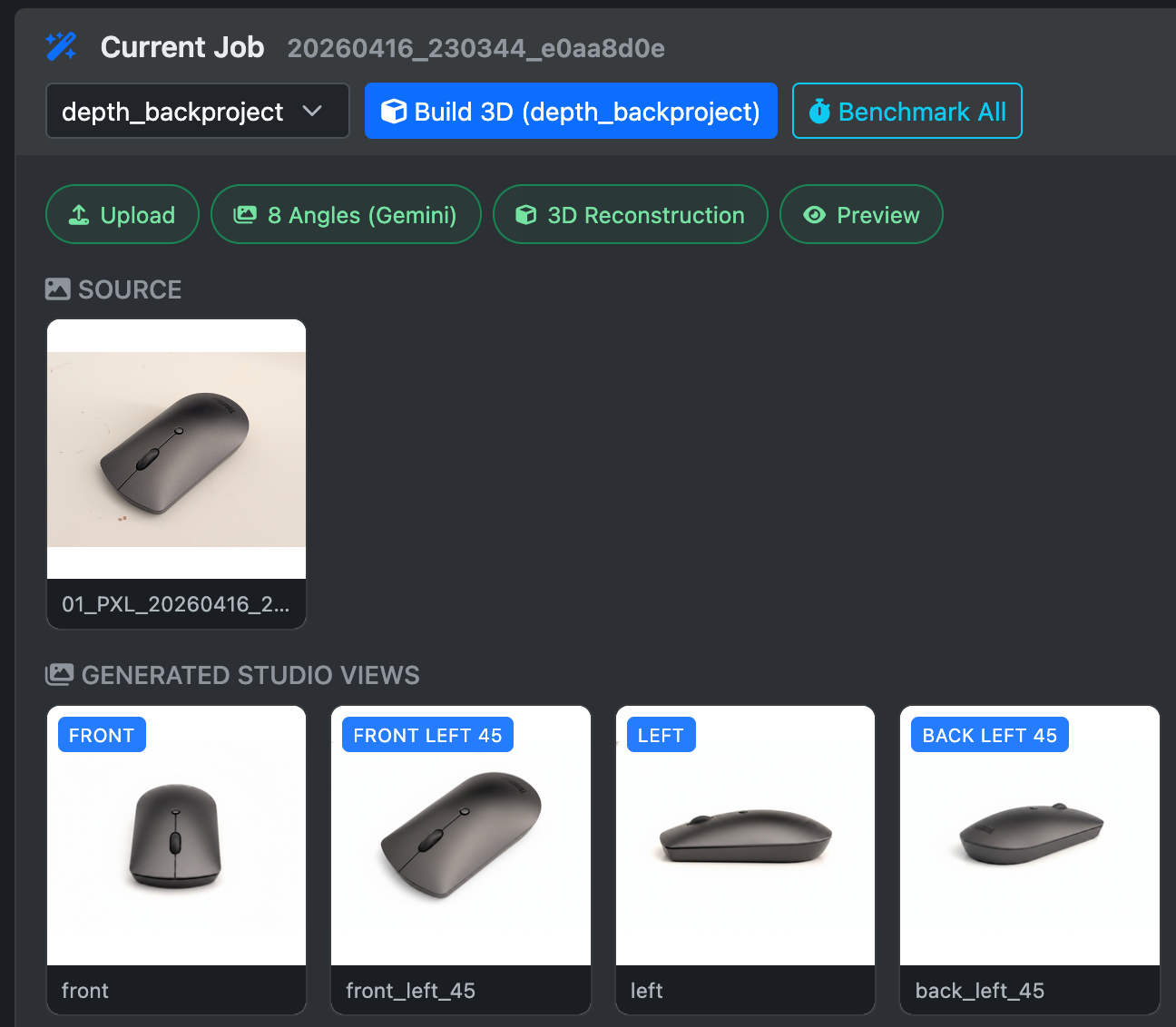

- Diffusion / image models — generate or edit images and graphics.

- Vision models — read images: a photo of a delivery note, a screenshot, a product on a shelf.

- Audio models — transcribe calls, clone voices, generate speech for IVR or video.

- Embedding models — turn text into numbers (vectors) so you can search by meaning rather than keyword. The magic behind “find me invoices that look like this one”.

- Tool calling — the model is allowed to invoke functions: read a file, query a database, send an email.

- Memory — somewhere persistent for the model to recall past sessions, customer preferences, or running notes.
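To make "search by meaning" concrete, here is a minimal sketch of the comparison step behind an embedding search. The three-number vectors and document names are made up for illustration. A real embedding model returns hundreds or thousands of numbers per text, but the ranking logic is the same.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical, hand-written vectors standing in for real embeddings.
documents = {
    "invoice: 50 printed mugs": [0.9, 0.1, 0.0],
    "invoice: 40 branded cups": [0.8, 0.2, 0.1],
    "staff holiday rota": [0.0, 0.1, 0.9],
}

def rank_by_meaning(query_vector, docs):
    """Sort document names from most to least similar to the query vector."""
    return sorted(
        docs,
        key=lambda name: cosine_similarity(query_vector, docs[name]),
        reverse=True,
    )

query = [0.85, 0.15, 0.05]  # pretend embedding of "mug order invoice"
ranking = rank_by_meaning(query, documents)
print(ranking)
```

The two invoices rank above the rota even though none of them shares the exact words of the query, which is the whole appeal over keyword search.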

Active parameters, mixture-of-experts, model size: you will see these terms thrown around. They mostly tell you how clever and how expensive a particular model is. A 70-billion parameter model is roughly a clever graduate; a 4-billion parameter model that fits on a Mac mini is a bright sixth-former who is fast and cheap and forgets things. Pick the smallest model that does the job. Bigger is not always better, and is almost always more expensive to run.

Two terms worth knowing properly. Mixture-of-experts (MoE) is an architecture where a very large model is internally split into many sub-networks (“experts”), and only a few are consulted for any given token. A 200-billion-parameter MoE model might activate only 20 billion at a time, so you get the breadth of a huge model at roughly the speed of a much smaller one. The full model still has to fit in memory, though. Several openly documented models, such as Qwen and DeepSeek, use this trick, and most flagship cloud models are widely believed to as well. Active parameters is shorthand for that activated slice: the part actually doing the work on each token. When somebody quotes a model as “235B/22B” they mean 235 billion parameters total, 22 billion active per token. The active number drives speed and running cost; the total number decides how much memory the hardware needs.
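The routing idea can be sketched in a few lines. This is a toy illustration, not how any particular model is implemented: the router scores are invented, and real models route inside each layer rather than once per token. It also works out the activated share for the "235B/22B" example.

```python
def pick_experts(router_scores, k=2):
    """Return the indices of the k highest-scoring experts for one token."""
    ranked = sorted(
        range(len(router_scores)),
        key=lambda i: router_scores[i],
        reverse=True,
    )
    return sorted(ranked[:k])

def active_fraction(total_billion, active_billion):
    """Share of the model's parameters that run for each token."""
    return active_billion / total_billion

# Hypothetical router scores for one token across 8 experts.
scores = [0.05, 0.40, 0.02, 0.10, 0.30, 0.03, 0.06, 0.04]

print(pick_experts(scores))                 # the two experts chosen for this token
print(round(active_fraction(235, 22), 3))   # share of a 235B/22B model in use per token
```

In the 235B/22B case, a little under a tenth of the model does the work on any given token.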

Harnesses vs the Underlying Technology

This is the single most useful mental model in the field. The thing you talk to (ChatGPT, Claude, Cursor, your bespoke script) is a harness. The harness wraps a model, gives it tools, hands it memory, and renders the result. Different harnesses on the same model produce wildly different experiences.

So when somebody says “I tried Claude and it was rubbish”, the more useful question is which harness, which model, which task. The Claude consumer chat app, Claude Code in the terminal, and a bespoke Anthropic API call from your own PHP script all behave very differently — even though the underlying model may be identical.

Best-in-Class, in 2026

The leaderboards will move again before this paragraph is six months old, but the broad strokes are stable enough to plan around:

| Provider | Best For |
|---|---|
| Anthropic (Claude) | Code, structured writing, long careful documents, agentic harnesses |
| Google (Gemini, nano-banana) | Vision-heavy tasks, very large contexts, image generation and editing |
| xAI (Grok) | Voice interaction, fast image generation, real-time web context |
| Midjourney | Artistic image generation where taste matters more than precision |
| Gemma (local) | General-purpose local LLM and vision; the workhorse for on-device tasks |
| Chinese labs (Qwen, DeepSeek, etc.) | Small, fast, surprisingly capable on-device coding models |
| Hugging Face | Niche specialist models: OCR for receipts, audio classification, etc. |

The practical advice: pair Anthropic for the thinking, Gemini or Gemma for the seeing, and a small local model for the boring batch work. Almost every small-business workflow we build follows that division of labour.

Cloud API vs Local Inference

Cloud APIs (OpenAI, Anthropic, Google) give you the cleverest models at the cost of a per-token bill and the small risk of sending your data through someone else’s servers. Local inference, via Ollama, LM Studio, llama.cpp, or MLX on Apple Silicon, runs the model on your own hardware: no per-call cost and no data leaving the office, in exchange for a slightly less clever model. Ollama is the developer’s default (a single CLI command and an HTTP API on port 11434). LM Studio is the friendly Mac-and-Windows desktop app with a model browser, a chat UI, and an OpenAI-compatible local server, ideal for owners who would rather click than type.

A simple rule of thumb: if you would do it ten thousand times a month, run it locally; if you would do it ten times, use a cloud model. The break-even point is surprisingly low.
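The arithmetic behind that rule of thumb can be sketched as below. Every figure here is a placeholder chosen to make the sums easy, not a real price: substitute your provider's current rate, your typical prompt size, and your own estimate of hardware and electricity cost.

```python
def monthly_cloud_cost(calls_per_month, tokens_per_call, price_per_million_tokens):
    """Cloud bill for one month, given a flat per-token price."""
    return calls_per_month * tokens_per_call * price_per_million_tokens / 1_000_000

def break_even_calls(local_monthly_cost, tokens_per_call, price_per_million_tokens):
    """Calls per month at which the cloud bill equals the fixed local cost."""
    cost_per_call = tokens_per_call * price_per_million_tokens / 1_000_000
    return local_monthly_cost / cost_per_call

# Placeholder assumptions, not real prices.
PRICE_PER_MILLION = 5.00   # assumed cloud price per million tokens
TOKENS_PER_CALL = 2_000    # assumed prompt plus reply size
LOCAL_MONTHLY = 30.00      # assumed hardware amortisation plus electricity

print(monthly_cloud_cost(10, TOKENS_PER_CALL, PRICE_PER_MILLION))
print(monthly_cloud_cost(10_000, TOKENS_PER_CALL, PRICE_PER_MILLION))
print(break_even_calls(LOCAL_MONTHLY, TOKENS_PER_CALL, PRICE_PER_MILLION))
```

The calculation ignores the quality gap between a cloud model and a local one, so treat the break-even figure as a starting point for the decision rather than the answer.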

Calling a local model is easier than most people expect. Once Ollama is installed and a model is pulled, this is enough:

```bash
curl http://localhost:11434/api/generate \
  -d '{
    "model": "gemma3:4b",
    "prompt": "Summarise this customer email in one polite sentence: ...",
    "stream": false
  }'
```

Types of AI Wrappers

Once you can see the harness as separate from the model, the market sorts itself into a tidy ladder of capability:

- Consumer chat apps — ChatGPT, Gemini, Grok. Easy, but limited features and surprisingly easy to mis-use for business work.

- Software extensions — Copilot in Office, Gemini in Google Workspace, AI inside Photoshop or Canva. Useful, but constrained to the host app.

- Standalone AI software — Claude Projects, Perplexity, NotebookLM, Claude Cowork. Better at long-running document work.

- Agentic harnesses — Claude Code, Cursor, Codex CLI, Hermes Agent, Pi Agent. The model is given tools, a terminal, and a folder of your files. This is where serious automation lives.

- Custom scripts and tools — a fixed pipeline you (or we) write once: a defined input, defined output, model in the middle. Cheapest, fastest, most reliable.

Most small businesses should be living somewhere between the last two. Chat apps are for one-off questions; the real productivity comes from harnesses that touch your files and scripts that run on a schedule.

Context Engineering and Prompting

“Prompt engineering” is the bit everyone fixates on; context engineering is the bit that actually works. The model can only reason about what it can see. If you put rubbish in, you get something articulate, confident and wrong out. If you put in a clean input, an example output and a brief on the constraints, the same model will perform like a different species.

Three rules that pay back disproportionately:

- Show, do not tell. One worked example beats five paragraphs of instruction.

- Mind the context window. Beyond a certain size, models start to wander, hallucinate, or quietly forget the earlier instructions. Keep the prompt lean.

- Beware emphatic negatives. “Do NOT delete files” can occasionally have the opposite effect — the model latches onto “delete files”. Phrase as a positive constraint: “Only read files; never modify or remove them.”

Asking for output in a structured format — XML or JSON — makes the result far easier to parse and far harder for the model to fluff. Here is a small PHP example using XML, which we find more forgiving than JSON when the model occasionally adds stray punctuation:

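```php
<?php
// Example enquiry (hypothetical); in practice this comes from your inbox or web form.
$enquiry = 'Can you quote for 50 branded mugs? The wedding is next week.';

// One worked example in the prompt shows the model the exact shape we want back.
$prompt = <<<TXT
Categorise this customer enquiry. Output ONLY XML, no commentary.
<example>
<category>quote_request</category>
<urgency>high</urgency>
<summary>Wants 50 mugs branded for a wedding next week.</summary>
</example>
Enquiry: {$enquiry}
TXT;

// POST the prompt to the local Ollama server.
$ch = curl_init('http://localhost:11434/api/generate');
curl_setopt_array($ch, [
    CURLOPT_RETURNTRANSFER => true,
    CURLOPT_POST           => true,
    CURLOPT_POSTFIELDS     => json_encode([
        'model'  => 'gemma3:4b',
        'prompt' => $prompt,
        'stream' => false,
    ]),
    CURLOPT_HTTPHEADER     => ['Content-Type: application/json'],
]);
$raw = curl_exec($ch);
if ($raw === false) {
    exit("Request to Ollama failed\n");
}

// Ollama returns JSON; the model's text is in the "response" field.
$response = json_decode($raw, true)['response'] ?? '';

// Wrap the reply in a root element so it parses with or without the <example> wrapper.
libxml_use_internal_errors(true);
$xml = simplexml_load_string('<root>' . $response . '</root>');
if ($xml === false) {
    exit("Model did not return valid XML\n");
}
$category = (string) ($xml->xpath('//category')[0] ?? '');
$urgency  = (string) ($xml->xpath('//urgency')[0] ?? '');
```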

Tools and Mid-Inference Execution

Most readers picture an LLM as a one-shot text generator: prompt in, paragraph out. The reality — and the mechanic that makes every modern agent possible — is more interesting. The model is allowed to pause itself mid-sentence, ask the harness to run a tool, and resume once the tool’s result has been pasted back into its context. Inference is a conversation between the model and the surrounding code, not a monologue.

A registered tool is just a small function with a name, a description, and a JSON schema for its arguments. The harness shows that catalogue to the model alongside the user’s prompt. When the model decides it needs a fact it does not have, it emits a structured tool call instead of guessing. The harness executes it, feeds the result back, and inference continues. The model can chain several tool calls in a single response — query the database, call an API, write a file, then summarise — without ever returning control to the user.
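The loop described above can be sketched in plain Python. This is an illustration of the mechanic only: `fake_model` is a stand-in that behaves the way a real LLM would, the `get_stock_level` tool and its stock table are hypothetical, and real harnesses use their provider's own message format.

```python
import json

def get_stock_level(sku):
    """Hypothetical tool: look up stock in a hard-coded table."""
    return {"MUG-01": 12, "MUG-02": 0}.get(sku, 0)

# Tool catalogue: a name, a description, and the function to run.
TOOLS = {
    "get_stock_level": {
        "description": "Return units in stock for a SKU.",
        "function": get_stock_level,
    },
}

def fake_model(messages):
    """Stand-in for a real LLM: asks for a tool once, then answers."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {"tool_call": {"name": "get_stock_level", "arguments": {"sku": "MUG-01"}}}
    return {"text": f"We have {tool_results[-1]['content']} of MUG-01 in stock."}

def run_harness(user_prompt, model=fake_model, max_steps=5):
    """Loop until the model returns text instead of a tool call."""
    messages = [{"role": "user", "content": user_prompt}]
    for _ in range(max_steps):
        reply = model(messages)
        if "tool_call" not in reply:
            return reply["text"]
        call = reply["tool_call"]
        result = TOOLS[call["name"]]["function"](**call["arguments"])
        # Paste the tool's result back into the context and let the model continue.
        messages.append({"role": "tool", "name": call["name"], "content": json.dumps(result)})
    raise RuntimeError("Model kept calling tools without answering")

print(run_harness("How many MUG-01 do we have?"))
```

The `max_steps` cap is a design choice worth keeping in any real harness: it stops a confused model from calling tools in a loop forever.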

This is what separates a chat app from an agent. ChatGPT in a browser can only talk; an agentic harness wired to your filesystem, database, and APIs can do. The same underlying model, dramatically different behaviour. Every workflow described later in this post — the MCP database queries, the batch loops, the report generation — rides on this single mechanic.

The single most powerful tool you can give an agent is the terminal. With shell access, the model can list files, run scripts, query databases, call APIs with curl, install packages, write and execute new tools on the fly, and inspect its own work. Most other tools are special cases of “use the terminal”. This is also why agentic harnesses live in the IDE or the command line rather than the browser — that is where the terminal is.

The MCP (Model Context Protocol) standard, popularised by Anthropic, is simply an agreed-upon way for harnesses to discover and invoke tools that live in separate processes. Once a piece of software exposes an MCP server, any compliant harness can use it without any custom integration code.

Strengths and Weaknesses by File Type

Models are best at the formats they were trained on, which means text, HTML, CSV, Markdown, JSON, well-known programming languages and broadly any well-documented public format. They are noticeably weaker at proprietary binary files — native CAD, PSD, INDD, IDML, raw camera formats — because the training data simply does not exist at the same density.

The workaround is the harness: give the model a CLI or an API for the binary tool, and let it speak plain English while the tool does the actual file work. KiCad, Blender, Adobe InDesign (via ExtendScript) and browser DevTools all expose this kind of bridge. The model becomes a confident director instead of an unreliable file editor.

For the formats that sit between “clean text” and “sealed binary” — spreadsheets, slide decks, Word documents, PDFs, InDesign, and the messy HTML of the open web — Python is the universal solvent. openpyxl reads and writes .xlsx, python-pptx handles PowerPoint, python-docx covers Word, pypdf and pdfplumber extract text and tables from PDFs, BeautifulSoup tames any HTML page into a queryable tree, and there is a small library for almost every other office format including IDML. For semantic retrieval over your own documents — the “chat with my company files” workflow — LlamaIndex wires embeddings, a vector store and the LLM together with about ten lines of code. The harness writes a five-line script using one of these libraries and the file is suddenly readable, editable, and chainable into the rest of the workflow. The practical rule: “if Python has a library for it, your agent can probably handle it”.

Computer Use, in 2026

“Computer use” — letting a model literally drive your mouse and keyboard via screenshots — is real, but in 2026 it is slow, expensive in tokens, and best reserved for short, well-rehearsed sequences. For anything you do more than a handful of times a week, an API or a script is dramatically more reliable. Save computer use for the awkward little jobs where no API exists and the workflow is a few clicks deep.

Software and Website APIs — the Easy Win

If a tool you already use has an API, an agentic harness can usually drive it from plain English. No clicking, no copy-paste. Brightpearl, Xero, Shopify, Mailchimp, Royal Mail, Companies House, Google Search Console — every one of these is a few well-engineered prompts away from being “ask the assistant” rather than “open the app and remember the workflow”.

Two categories of public API quietly transform what an agent knows about the world. Search APIs (Brave Search, Tavily, Serper, Perplexity, Exa) let the model look things up before answering, instead of relying on whatever was in its training data eighteen months ago. Wired into a harness, this is the most effective remedy for hallucination in any task that involves current facts: pricing, opening times, recent news, competitor information, the latest version of a software package. Geographic APIs let an agent reason about places, distances, postcodes and routes: OpenStreetMap data (free), the Overpass API for structured queries over it, Nominatim for address lookup, and Google Maps as the polished commercial alternative. “List every furniture shop within five miles of our Maidstone showroom that has a website” goes from a half-day of manual browsing to a thirty-second tool call. The same pattern works for weather, exchange rates, public-holiday calendars, Companies House filings: pick the API once, and every agent on your machine inherits the new sense.
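As a sketch of what the furniture-shop request turns into, the snippet below builds an Overpass QL query using only the standard library. The coordinates are an approximate guess at central Maidstone, the function names are our own, and the public endpoint shown has a usage policy you should read before pointing a batch job at it. Only the query is built here; `fetch_shops` is defined but not called.

```python
import json
import urllib.parse
import urllib.request

# Public Overpass endpoint; check its usage policy before heavy use.
OVERPASS_URL = "https://overpass-api.de/api/interpreter"

def build_overpass_query(lat, lon, radius_miles, shop_type="furniture"):
    """Build an Overpass QL query for shops of one type, with a website, near a point."""
    radius_m = round(radius_miles * 1609.344)  # Overpass expects metres
    return (
        "[out:json][timeout:25];"
        f'nwr["shop"="{shop_type}"]["website"](around:{radius_m},{lat},{lon});'
        "out center;"
    )

def fetch_shops(query):
    """Send the query to Overpass and return the list of matching elements."""
    data = urllib.parse.urlencode({"data": query}).encode()
    with urllib.request.urlopen(OVERPASS_URL, data=data, timeout=60) as resp:
        return json.load(resp)["elements"]

# Approximate coordinates (an assumption; use your own showroom's).
query = build_overpass_query(51.27, 0.52, radius_miles=5)
print(query)
```

An agent with a terminal can write and run something like this on its own; the value of registering it as a tool is that the model stops reinventing the query each time.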

Harnesses that Talk to Your APIs and Database

This is where small businesses see the largest single jump in productivity. An agentic harness with read access to your database, a web API or two, and a clear instruction can answer questions you would previously have raised a ticket for. Better still, it can render the answer — not as a wall of text, but as a clean HTML report with a table and a chart.

A typical stacked instruction looks like this:

1. Connect to MySQL via MCP.

2. Pull every order in the last 30 days.

3. Group by SKU; total units, total revenue, average margin.

4. Cross-reference each SKU against the supplier lead-time table.

5. Render a single self-contained HTML file:

   - sortable table

   - inline SVG bar chart of top 20 by revenue

   - red highlight on rows where projected stock-out is inside lead time

6. Save to ./reports/sales-30d-{date}.html and open it.

Stacking instructions like this turns the harness into a reusable reporting tool. Once it works, you save the prompt as a SKILL.md (more on that below) and run it weekly with a single command.
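For a sense of what the harness produces for the rendering step, here is a minimal sketch of the red-highlight logic. The field names (`days_of_stock`, `lead_time_days`) and the sample rows are hypothetical, and the sorting and SVG chart are left out to keep it short.

```python
from html import escape

def render_report(rows):
    """Render rows as a self-contained HTML table, flagging stock-out risk in red."""
    body = []
    for row in rows:
        # At risk: stock will run out before a new delivery could arrive.
        at_risk = row["days_of_stock"] < row["lead_time_days"]
        style = ' style="background:#fdd"' if at_risk else ""
        body.append(
            f"<tr{style}><td>{escape(row['sku'])}</td>"
            f"<td>{row['units']}</td><td>{row['revenue']:.2f}</td></tr>"
        )
    return (
        "<!doctype html><html><body><table>"
        "<tr><th>SKU</th><th>Units</th><th>Revenue</th></tr>"
        + "".join(body)
        + "</table></body></html>"
    )

# Hypothetical rows, shaped like the output of the grouping and lead-time steps.
sample = [
    {"sku": "MUG-01", "units": 120, "revenue": 840.0, "days_of_stock": 5, "lead_time_days": 14},
    {"sku": "MUG-02", "units": 30, "revenue": 210.0, "days_of_stock": 40, "lead_time_days": 14},
]
html_report = render_report(sample)
print(html_report)
```

Keeping everything in one file with inline styles is what makes the report easy to email or archive: there are no stylesheets or scripts to go missing.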

Stacking the Same Prompt over Large Datasets

The same idea, applied at scale, is how AI starts processing volumes that would otherwise take a person a fortnight. You take one well-engineered prompt — classify, extract, summarise, translate — and stack it over thousands of rows from a database or CSV. The model is no longer a chat partner; it is a batch worker on a production line.

A minimal Python loop — the kind of thing we drop into a client’s scripting folder — looks like this:

```python
import re

import mysql.connector
import requests

db = mysql.connector.connect(host='localhost', user='root', database='shop')
cur = db.cursor(dictionary=True)
cur.execute("SELECT id, description FROM products WHERE category IS NULL LIMIT 5000")
rows = cur.fetchall()  # read everything first so the connection is free for updates

upd = db.cursor()
for row in rows:
    prompt = f"""Categorise this product. Output ONLY XML.
<example><category>kitchenware</category></example>
Product: {row['description']}"""
    r = requests.post(
        'http://localhost:11434/api/generate',
        json={'model': 'gemma3:4b', 'prompt': prompt, 'stream': False},
        timeout=60,
    )
    r.raise_for_status()
    text = r.json().get('response', '')
    # Skip rows where the model did not return a <category> tag instead of crashing.
    match = re.search(r'<category>(.*?)</category>', text, re.DOTALL)
    if not match:
        continue
    upd.execute(
        "UPDATE products SET category=%s WHERE id=%s",
        (match.group(1).strip(), row['id']),
    )
    db.commit()  # commit per row so an interrupted run keeps its progress
```

Five thousand products, categorised overnight, on a laptop, for the cost of the electricity to run the fan. That is the small-business dividend.

What Can a Small Business Actually Automate?

The pattern: anything that is repetitive, lives in well-known file types, and has a clear definition of done. Anything that is one-off, creative, or relationship-driven is still a job for a human — ideally a human now freed up by the automation.

SOPs as SKILL.md and Reusable Tools

The discipline that separates a tinkering sole trader from a properly automated business is writing the standard operating procedures down in a way the harness can read. The convention we use — popularised by Anthropic — is a SKILL.md: a short markdown file describing one task, in the imperative, with examples.

A bare-bones SKILL.md looks like this:

```markdown
# Generate Weekly Sales Report

Use when the user asks for the weekly sales report, weekly numbers, or Monday morning report.

## Steps

1. Run `./tools/sales-report.sh --period=7d`.
2. The tool writes an HTML report to `./reports/`.
3. Email the path to the owner via the email tool.

## Notes

- Always include the previous week comparison.
- Flag any SKU with sell-through > 90% in red.
```

That, plus a small shell script that does the actual work, replaces ten pages of Word-document procedure that nobody read. The harness picks up the skill automatically and runs it on demand.

Easy Starting Points: Hardware, OS, Cost

You do not need a server room. The cheapest credible starting point in 2026 is a Mac mini or a MacBook Pro with Apple Silicon and 32–64 GB of unified memory. macOS, MLX, Ollama and LM Studio are by far the smoothest local-AI experience available — LM Studio in particular gives non-developers a one-click way to download and chat with the same models the agentic harnesses use under the hood. NVIDIA hardware is faster per pound for serious training, but it is fiddly to configure and noisy on a desk. Linux sits in between — powerful, free, and a few weekends of setup away from joy.

The less obvious advantage of macOS is everything Apple has built into the OS for the agent to lean on. AppleScript and JavaScript for Automation let a harness drive almost any native app (Mail, Calendar, Numbers, Photos, Finder, even most professional apps like InDesign and Logic) with a few lines of script, once you have approved the one-off Automation permission prompt. Built-in OCR (the Vision framework, exposed through the shortcuts CLI and the Live Text APIs) reads receipts, screenshots and PDFs locally without sending anything to a third-party service. The Accessibility API exposes a structured, computer-readable tree of every window and control on screen, so an agent that has been granted Accessibility access can click the “Send” button in an app it has never seen by querying for it by role and name, which is far more reliable than screenshot-based computer use. Stitch all three together and a Mac becomes an unusually friendly host for an agent: native apps, native files, native automation, no APIs required.

For the “clever” work — agentic coding, long reasoning, careful writing — pay for an Anthropic plan and use Claude through Claude Code or Cursor. For everything else, run Gemma or a small Qwen locally via Ollama. Total monthly outlay for a five-person business: roughly the cost of one decent business lunch.

Agent Security and Guardrails

Agents that can write files, send emails and call APIs are wonderful right up until the moment they are not. The single most important habit is to constrain what an agent is allowed to do, rather than trust it to behave. “Yolo mode” — full unsupervised freedom — belongs in a sandbox, never on the production database.

Practical guardrails:

- Use small “dumb” models for tightly scoped tasks; reserve clever models like Claude Opus for jobs that genuinely need reasoning.

- Never expose a free-roaming agent directly to inboxes, customer messaging, or payment systems — always go through a script you wrote.

- Require explicit confirmation for any destructive operation (delete, send, charge).

- Keep a log of every tool call. If something goes wrong, you want to know which step did it.

- Build workflows from your SOPs, not from the agent’s improvisation.
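Two of those guardrails, confirmation for destructive operations and a log of every tool call, fit in one small wrapper. The sketch below is an illustration under assumptions: the tool names in `DESTRUCTIVE` are hypothetical, and the demo swaps the interactive prompt for an automatic "no" so it runs unattended.

```python
import json

# Hypothetical names of tools that must never run without a human saying yes.
DESTRUCTIVE = {"delete_file", "send_email", "charge_card"}

def guarded_call(name, func, args, log, confirm=input):
    """Run a tool only if allowed, and record every attempt in the log."""
    entry = {"tool": name, "args": args, "status": "ok"}
    if name in DESTRUCTIVE:
        answer = confirm(f"Allow {name} with {json.dumps(args)}? [y/N] ")
        if answer.strip().lower() != "y":
            entry["status"] = "refused"
            log.append(entry)
            return None
    result = func(**args)
    log.append(entry)
    return result

# Demo with stand-in tools and an automatic "no" instead of a real prompt.
log = []
guarded_call("read_file", lambda path: f"contents of {path}", {"path": "notes.txt"}, log)
guarded_call("delete_file", lambda path: "deleted", {"path": "notes.txt"}, log,
             confirm=lambda _: "n")
print(json.dumps(log, indent=2))
```

The point of routing every call through one function is that the agent has no other way to reach the tools, so the log is complete and the confirmation cannot be skipped.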

Plain-Language IT and Configuration

A pleasant side benefit of all of this: agentic harnesses are remarkably good at the parts of running a small business that owners traditionally hate. “Set up a virtual host for this new project”, “the printer has stopped responding, look at the logs and tell me why”, “back up the database to an external drive every night at 2am” — all reasonable plain-English requests, all things Claude Code or Cursor can do directly on your machine, with you watching every step.

A Closing Checklist

- Pick one repetitive task that costs you the most time this month.

- Decide if it lives in text-friendly files (yes — you are in business; no — find an API or a CLI bridge).

- Write the SOP in a paragraph, then turn it into a SKILL.md.

- Run it through a clever cloud model first to prove it works; move it to a local model when you scale.

- Constrain the agent. Log everything. Never let it touch production unattended.

- Polish the output until nobody can tell. That is the whole point.

This is the foundation. The next three instalments of the AI Insights for Small Business series go a layer deeper, one piece at a time: writing markdown SOPs your agent will actually follow, folder-based AI workflows with a complete worked example, and harnesses and local computer control. If you would rather skip the reading and have us build it for you, get in touch — we would be happy to help.